Hum… Another week just flew by… 🫣 How does time move so fast! This week was split into 3 main chunks:

- Tutoring an undergraduate year project

- My TA duties for lab

- Camera calibration

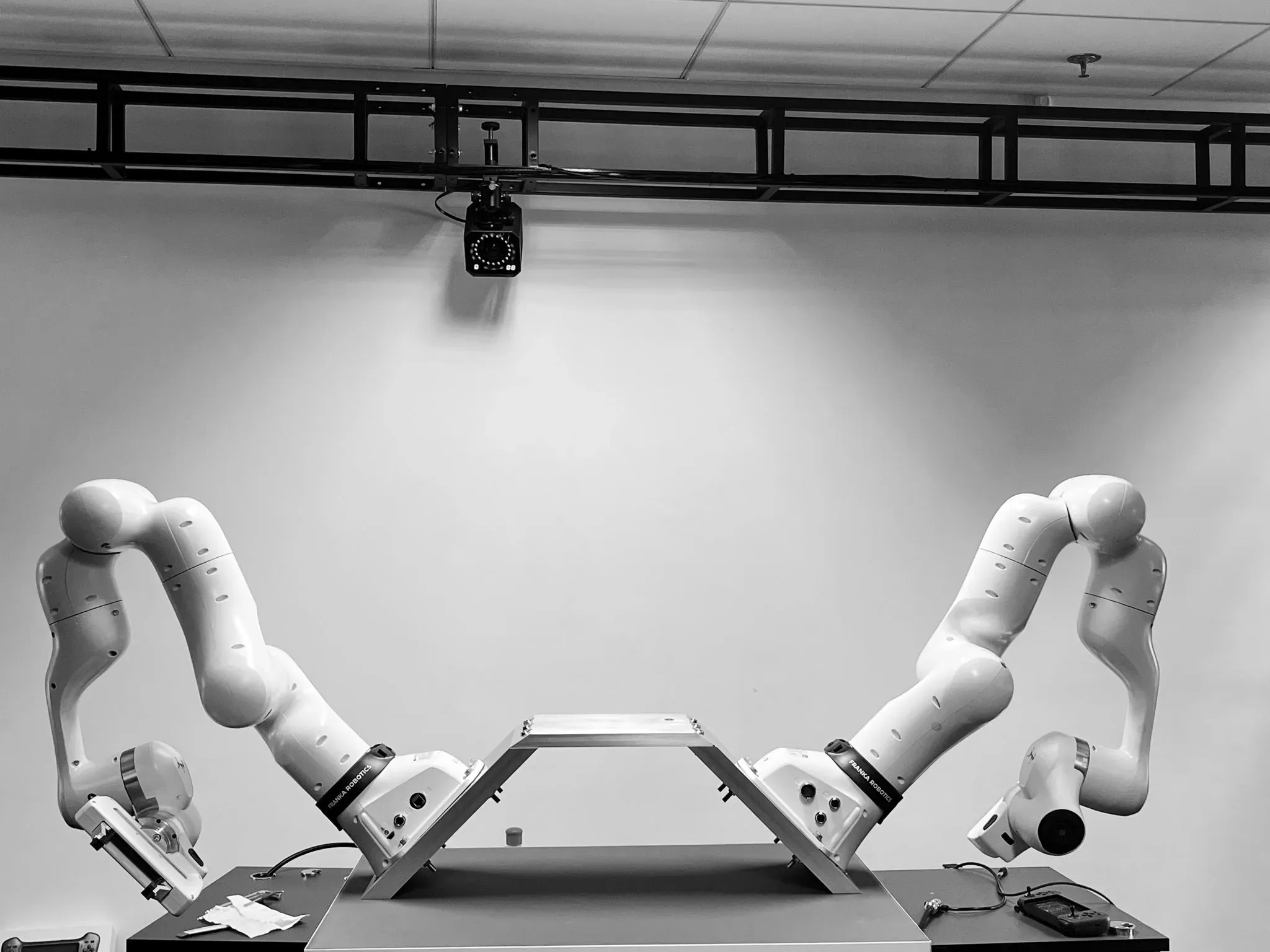

Bimanual Setup

I learned how to use Franka Research 3’s web API to mount the robot at a different angle: tilted with a 45-degree yaw instead of flat on a tabletop. A how-to guide is coming soon!

Setting this angle allows the operational space control of the two robots to mimic human arms much more naturally.

Grasp API Headaches

The grasp() API cost me two whole days! Holy cow!

The issue looked like this: I would ask the robot to grasp a cube, and it would just slip right out of the gripper. I thought my setup was robust, but it turns out I made a crucial logic mistake.

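A successful grasp in the libfranka API (libfranka: C++ library for Franka Robotics research robots) requires this condition to be met, where d is the measured distance between the gripper fingers and width is the commanded width:

width - epsilon_inner < d < width + epsilon_outer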

The default tolerance values (in meters) are:

epsilon_inner = 0.005

epsilon_outer = 0.005

However, when deploying a VLA model, the gripper action output is often binary (open or close). This means no specific width value is given to the low-level controller. When we command the robot to grasp, the desired width sent to the controller is basically 0.

But the Franka Hand physically stops at the cube’s width, say 5cm.

Since 5cm (0.05 m) is not less than the upper bound of 0.005 m, the controller flags the grasp as a failure and aborts. The simple solution? Set epsilon_outer = 0.08 (the maximum width of the gripper). Problem solved!
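To make the arithmetic concrete, here is a minimal sketch of the success check in plain Python. It only mirrors the inequality documented for libfranka's `franka::Gripper::grasp`; the function name `grasp_succeeds` and the constants are made up for illustration, and nothing here talks to a real robot.

```python
# Illustrative re-implementation of the documented grasp success window.
# grasp_succeeds is a hypothetical helper, not part of libfranka.

def grasp_succeeds(d, width, epsilon_inner=0.005, epsilon_outer=0.005):
    """Return True if the finger distance d (m) lies inside the accepted window."""
    return (width - epsilon_inner) < d < (width + epsilon_outer)


CUBE_WIDTH = 0.05   # m, where the fingers physically stop on the cube
MAX_WIDTH = 0.08    # m, maximum opening of the Franka Hand
COMMANDED = 0.0     # m, width sent when the policy only says "close"

# Default tolerances: 0.05 is not below 0.0 + 0.005, so the grasp is rejected.
default_ok = grasp_succeeds(d=CUBE_WIDTH, width=COMMANDED)

# Widened outer tolerance: 0.05 is below 0.0 + 0.08, so the grasp is accepted.
widened_ok = grasp_succeeds(d=CUBE_WIDTH, width=COMMANDED, epsilon_outer=MAX_WIDTH)

# Side effect of the wide window: fingers closing on nothing (d = 0) also pass.
empty_ok = grasp_succeeds(d=0.0, width=COMMANDED, epsilon_outer=MAX_WIDTH)

print(default_ok, widened_ok, empty_ok)
```

One thing to keep in mind with this fix: with a window that wide, the check accepts almost any final finger distance, so it no longer tells you whether something is actually in the gripper. If that matters, checking the reported gripper width afterwards is probably the safer signal.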

Deployment

This week, I also helped my undergraduate students set up the same teleoperation system I use. I taught them how to use a gamepad to teleoperate the Franka robot to pick up paper balls and throw them into a trash bin.

I trained a simple Action Chunking with Transformers model (📄 Learning Fine-Grained Bimanual Manipulation with Low-Cost Hardware) on their 50 recorded episodes. Hey, at least the success rate wasn’t zero! : - )

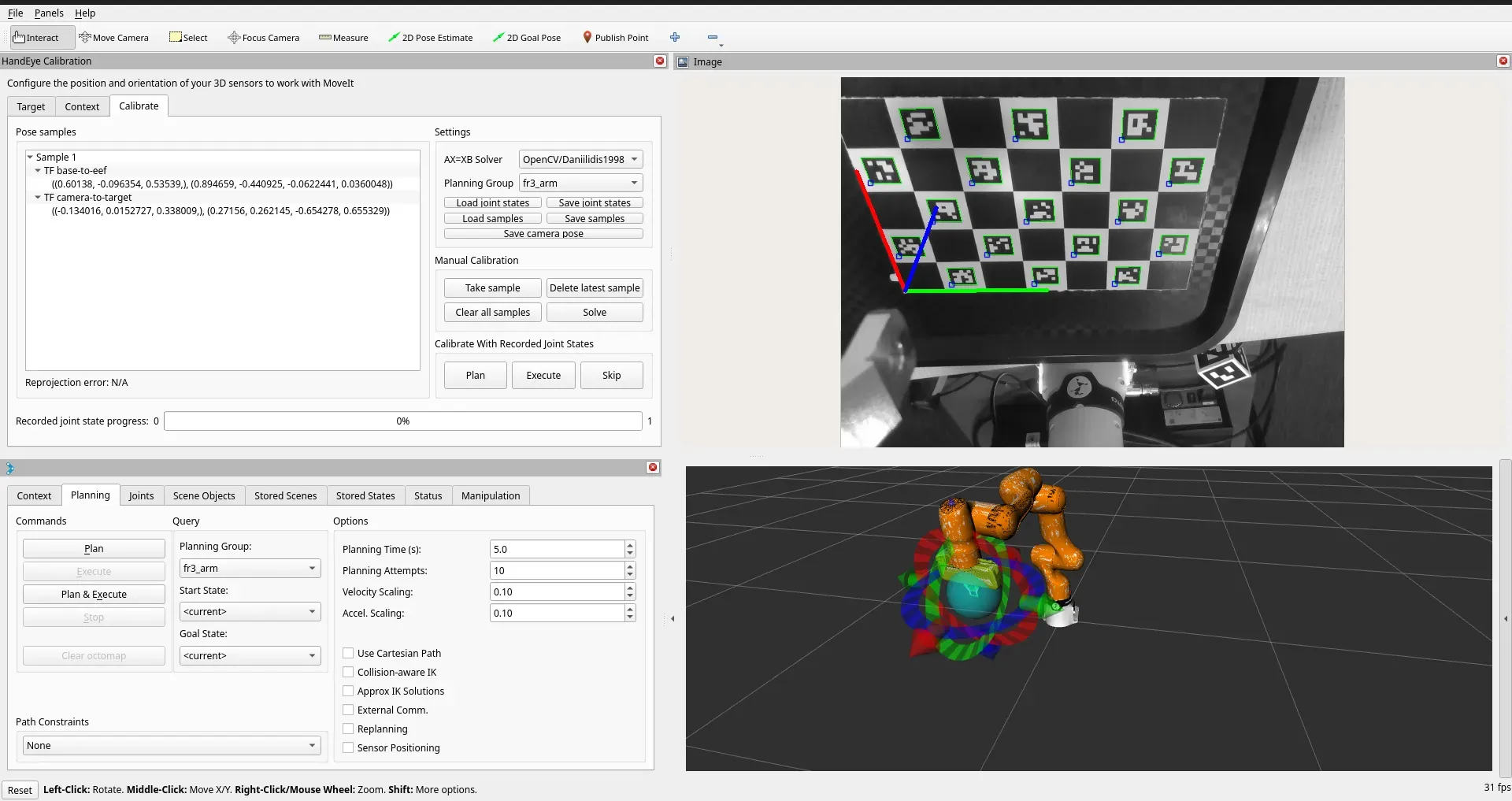

Calibration

The calibration has been haunting me for 2 weeks!

On one hand, I successfully calibrated once, but because I share the robot with my colleagues, the physical setup changed and I now need to use another robot.

On the other hand, I initially took a shortcut for fast prototyping and used a rather tedious manual process with easy_handeye (IFL-CAMP/easy_handeye: Automated, hardware-independent Hand-Eye Calibration).

Well, I finally said to myself: Man, I am done with this 😠. How about I make something self-contained? Something that benefits the open-source community and saves me time in the future?

Yes, I am going to make a full, robust tutorial for it!